AI Machine Learning Model for Defect Detection in Solar Panels

Built an AI model to detect defects in solar panels from Electroluminescence (EL) images during production-line quality inspection at Tindo Solar, South Australia.

The model was built in Python using Ultralytics YOLOv11, Pillow for image handling, and Tkinter for the prototype user interface.

Context: Tindo Solar · Manufacturing quality inspection · Machine Learning · Ultralytics YOLO

Date: February 2026

Introduction

While working at Tindo Solar as a machine operator from December 2025, I proposed and took part in a project that uses AI to detect defects in solar panels from Electroluminescence (EL) images.

What is EL? Electroluminescence (EL) is a non-destructive testing method in which an electrical current is applied to a solar panel, causing its cells to emit infrared light. This emission is captured by a specialised camera, allowing internal defects to be identified even when they are not visible to the naked eye.

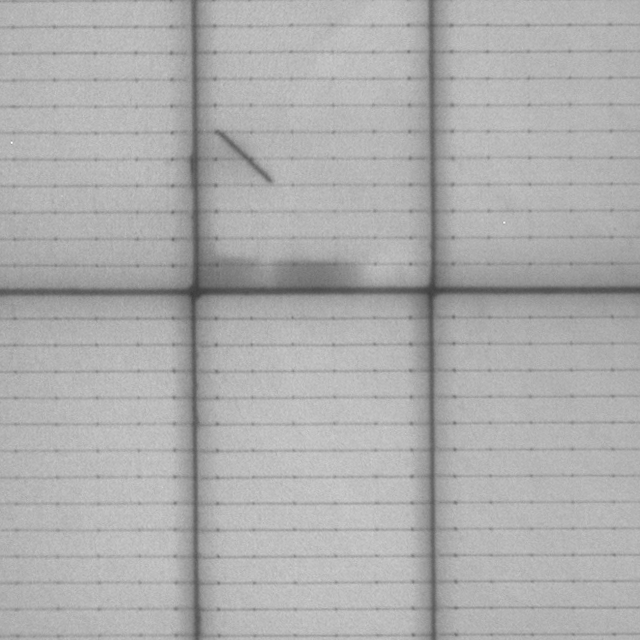

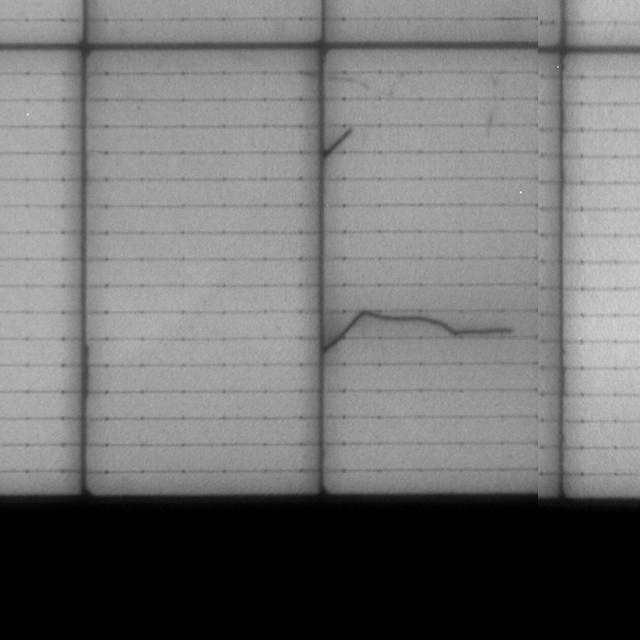

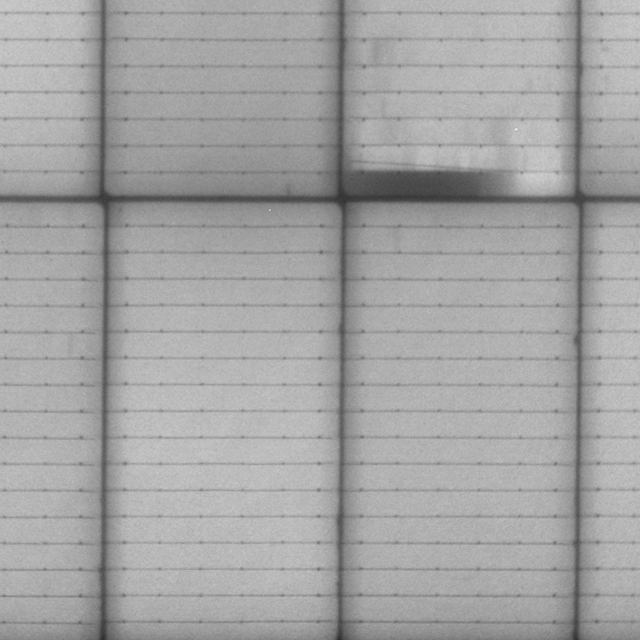

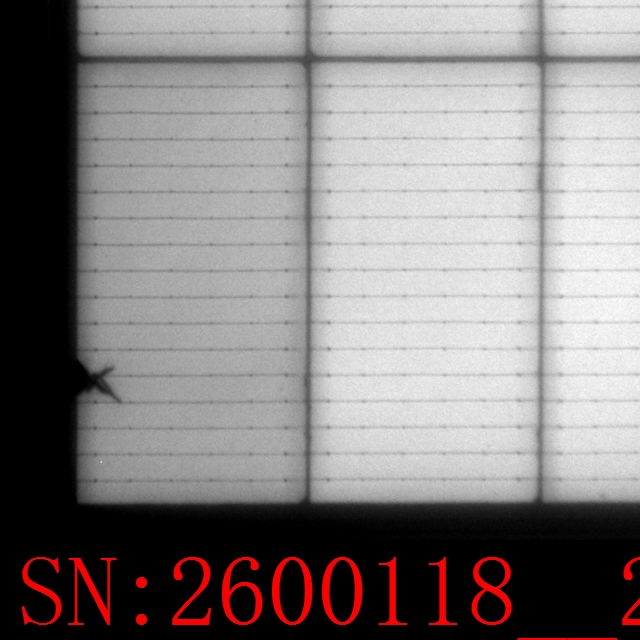

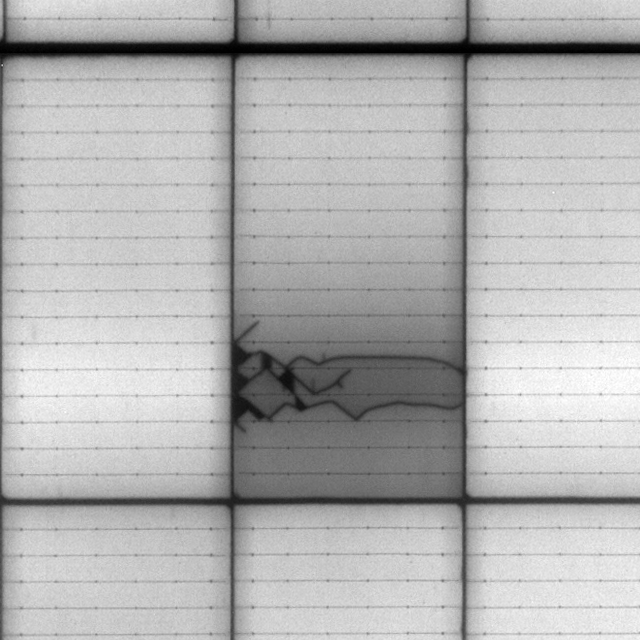

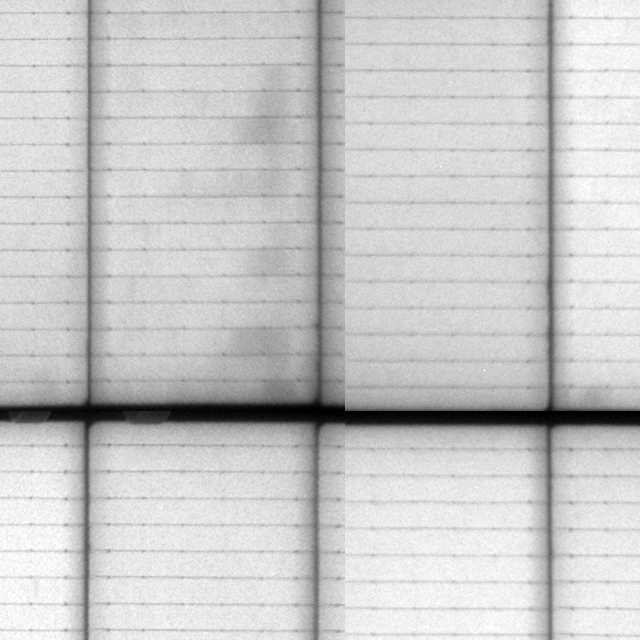

EL imaging can reveal several types of defects, with the most common being:

- Cracks: affecting performance and durability

- Soldering issues: disrupting electrical connections

- Scratches: degrading surface quality and efficiency

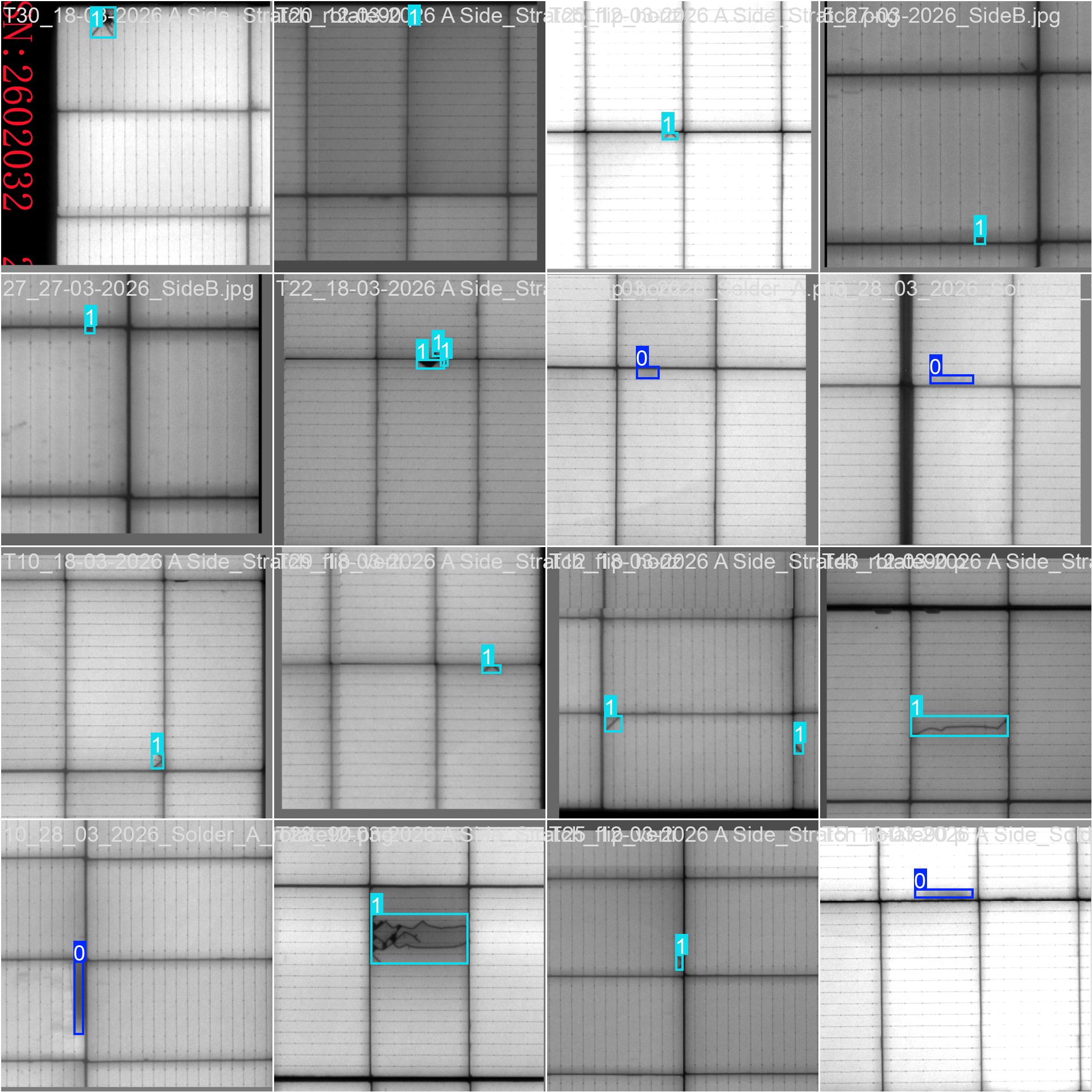

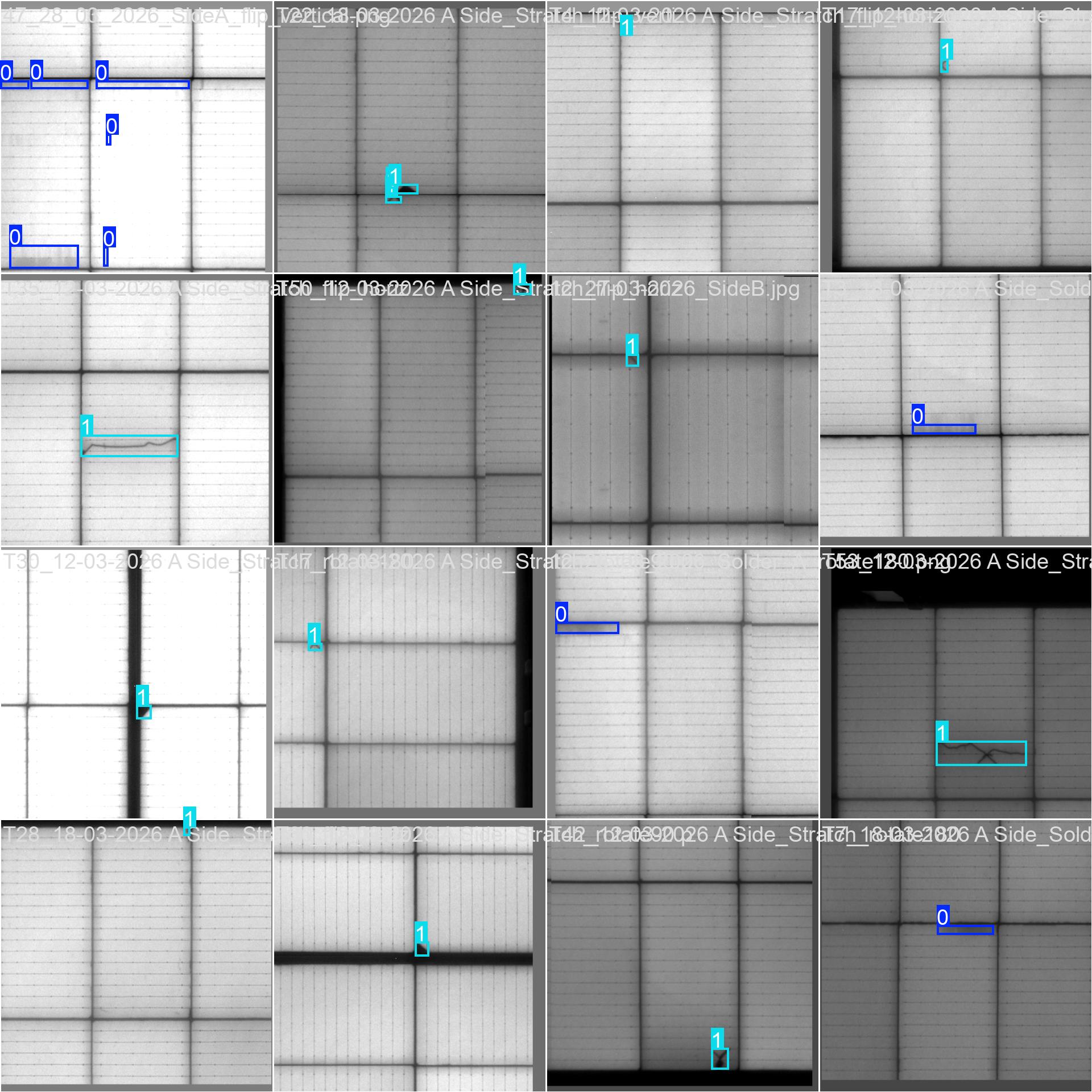

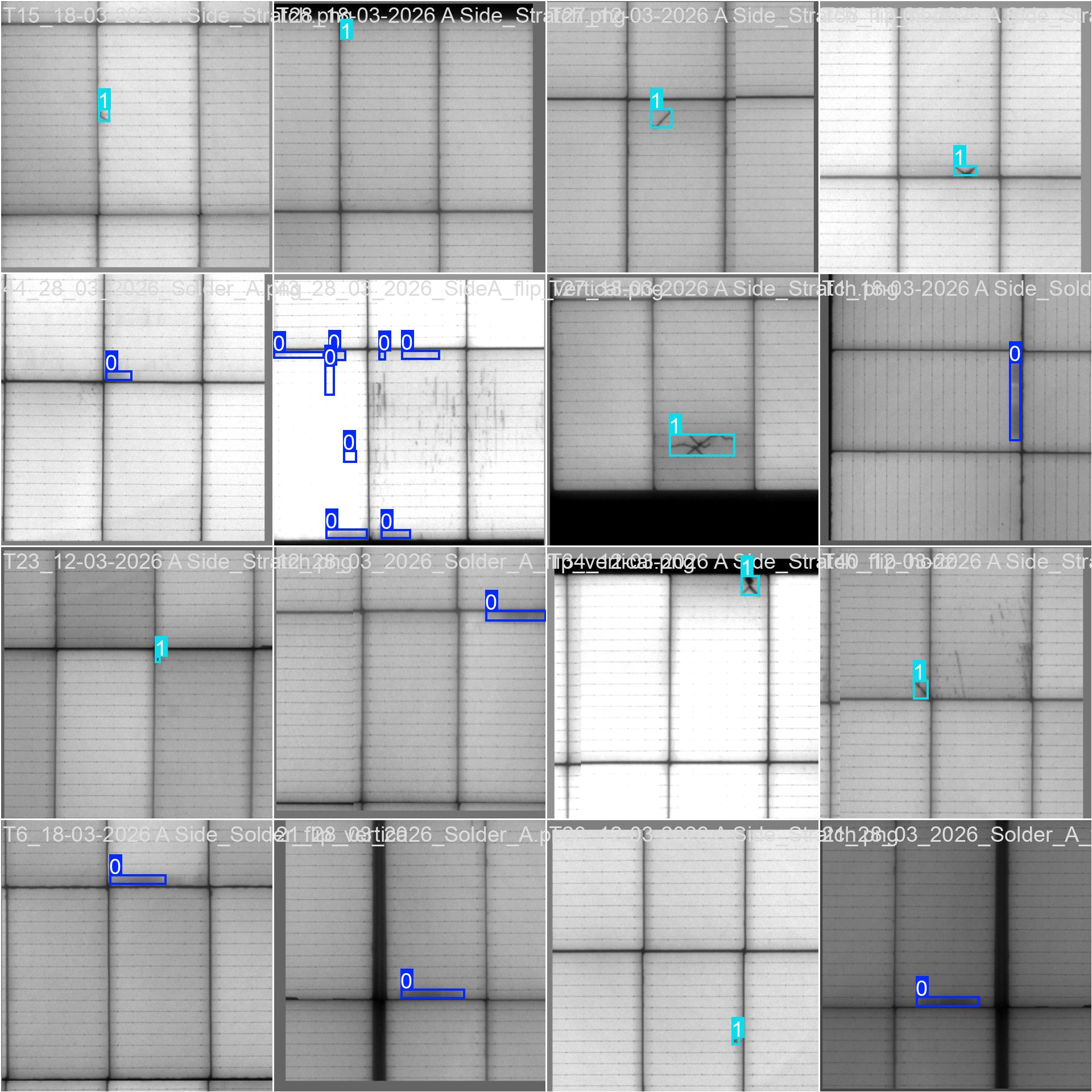

Illustrations of issues found in scanned images from the Electroluminescence (EL) inspection process.

In practice, the inspection process relies heavily on human observation, requiring technicians to analyse hundreds of images daily. This approach is time-consuming and prone to errors caused by subjectivity and fatigue.

To address these limitations, a Machine Learning-based solution was proposed to automate defect detection from EL images. The goal is to develop a model capable of accurately and efficiently identifying defects, thereby reducing inspection time, improving productivity, and supporting technicians in decision-making.

This project demonstrates the potential of applying artificial intelligence to enhance quality inspection processes in industrial environments.

System Architecture and Implementation

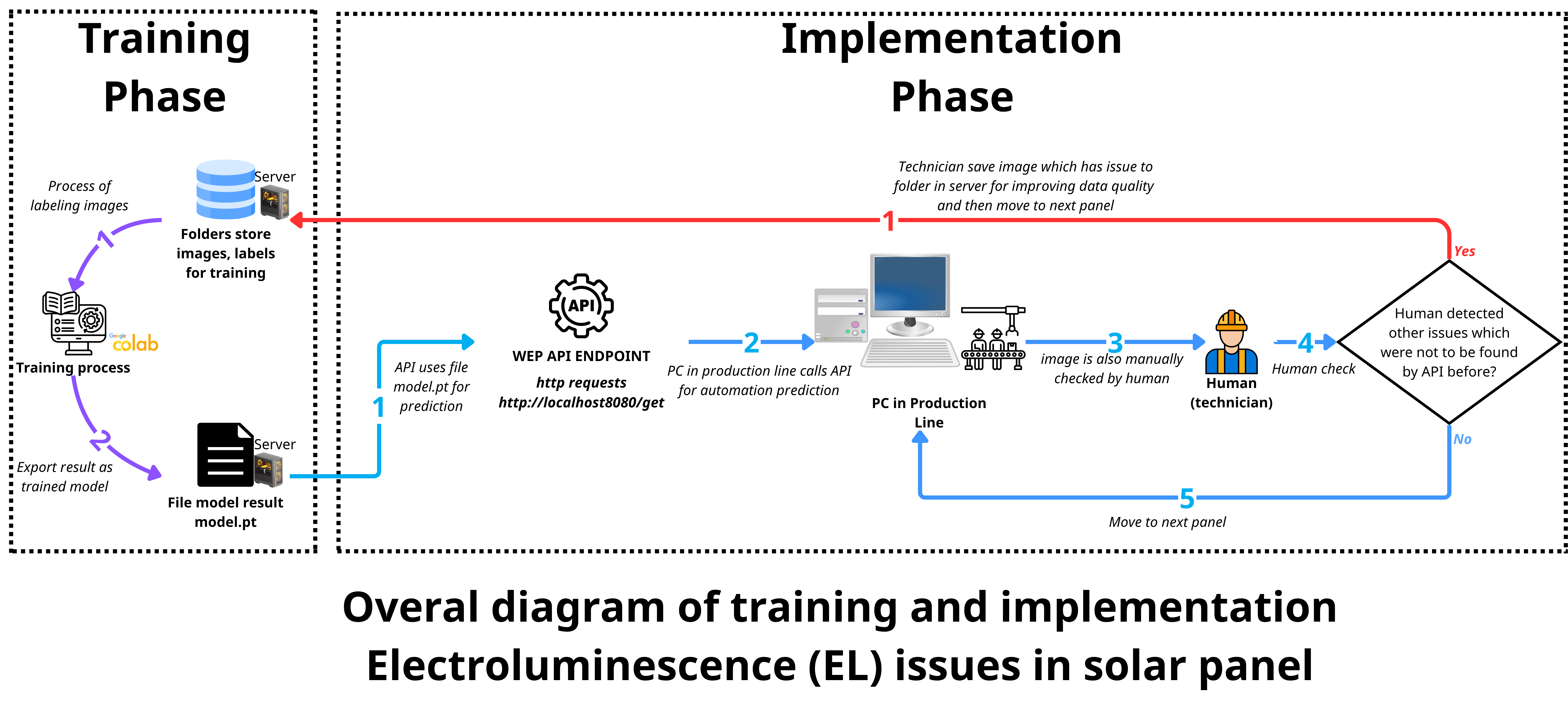

The project is organized into two main phases: the training phase and the implementation phase, forming a complete pipeline from data preparation to real-world deployment.

Overall diagram of training phase and implementation phase

1. Training Phase

The training phase focuses on preparing data and building the machine learning model:

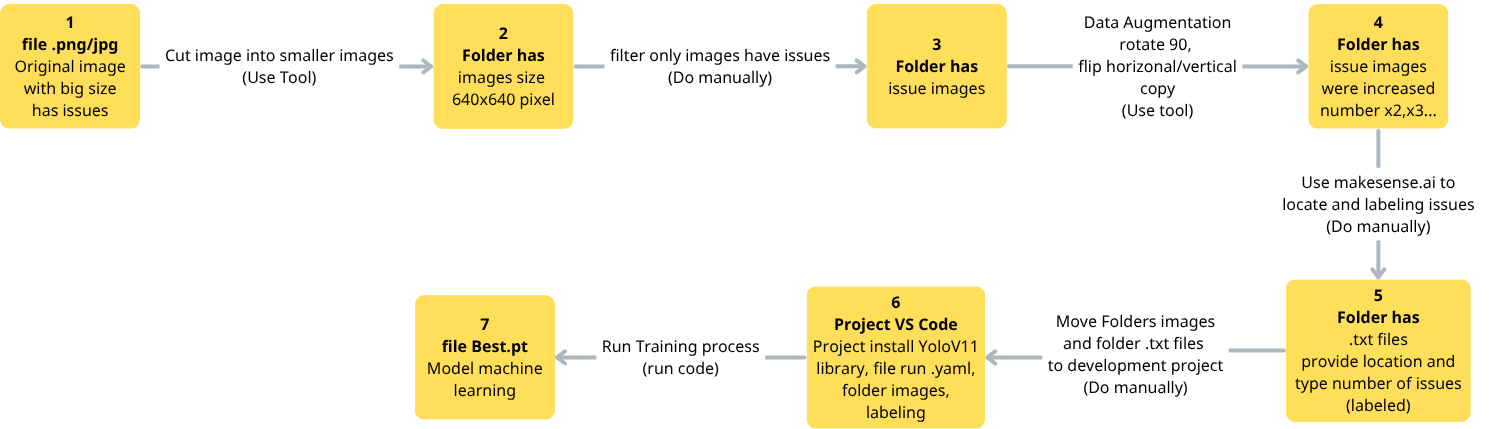

Training workflow: from data, labeling, and data augmentation to model training.

- Data Acquisition & Preprocessing — High-resolution EL images (e.g., 5336×10856 and 4796×2868 pixels) were captured from EL inspection machines. These images were divided into smaller patches of 640×640 pixels to match model input requirements.

- Data Annotation — Technicians manually reviewed the images and labeled each defect with its location and class (crack, soldering issue, or scratch).

- Data Augmentation — Techniques such as rotation (90°, 180°), flipping, and blurring were applied to improve dataset diversity and model generalization.

- Dataset Splitting — The dataset was split into 80% training and 20% validation sets. A YAML file was created for configuration.
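As a rough illustration of the patching step described above, the sketch below computes 640×640 crop boxes for a large EL image and, optionally, crops them with Pillow. The function names (`tile_boxes`, `split_image`) are hypothetical, and the edge handling is an assumption: the last row and column are shifted back inside the image so patches stay full size, because the project description does not say how border regions were treated.

```python
from typing import Iterator, List, Tuple

# (left, upper, right, lower), the box layout expected by Pillow's Image.crop
Box = Tuple[int, int, int, int]


def tile_boxes(width: int, height: int, tile: int = 640) -> Iterator[Box]:
    """Yield crop boxes that cover a width x height image with tile-sized patches.

    Assumption: the final row/column is aligned to the far edge, so it may
    overlap its neighbour instead of producing a smaller patch.
    """

    def starts(size: int) -> List[int]:
        if size <= tile:
            return [0]  # image smaller than one tile: a single, clipped patch
        positions = list(range(0, size - tile, tile))
        positions.append(size - tile)  # last patch ends exactly at the edge
        return positions

    for top in starts(height):
        for left in starts(width):
            yield (left, top, min(left + tile, width), min(top + tile, height))


def split_image(path: str, tile: int = 640):
    """Yield ((left, top), patch) pairs for one image file. Requires Pillow."""
    from PIL import Image  # imported lazily so tile_boxes has no dependencies

    with Image.open(path) as img:
        for box in tile_boxes(img.width, img.height, tile):
            # The offset is kept so detections can later be mapped back
            # to coordinates in the full-size image.
            yield (box[0], box[1]), img.crop(box)
```

Under these assumptions a 4796×2868 image produces 8 × 5 = 40 patches and a 5336×10856 image produces 9 × 17 = 153.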

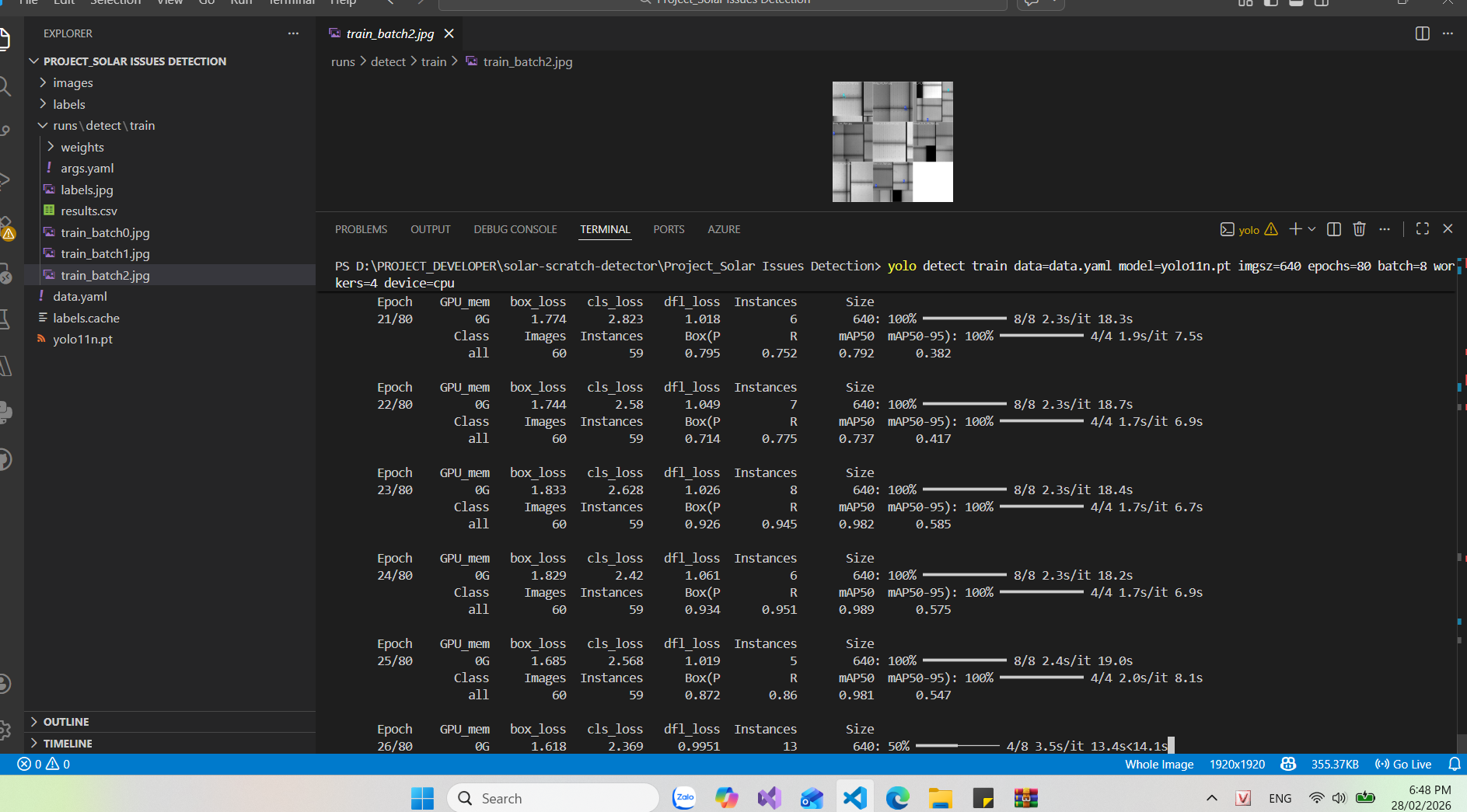

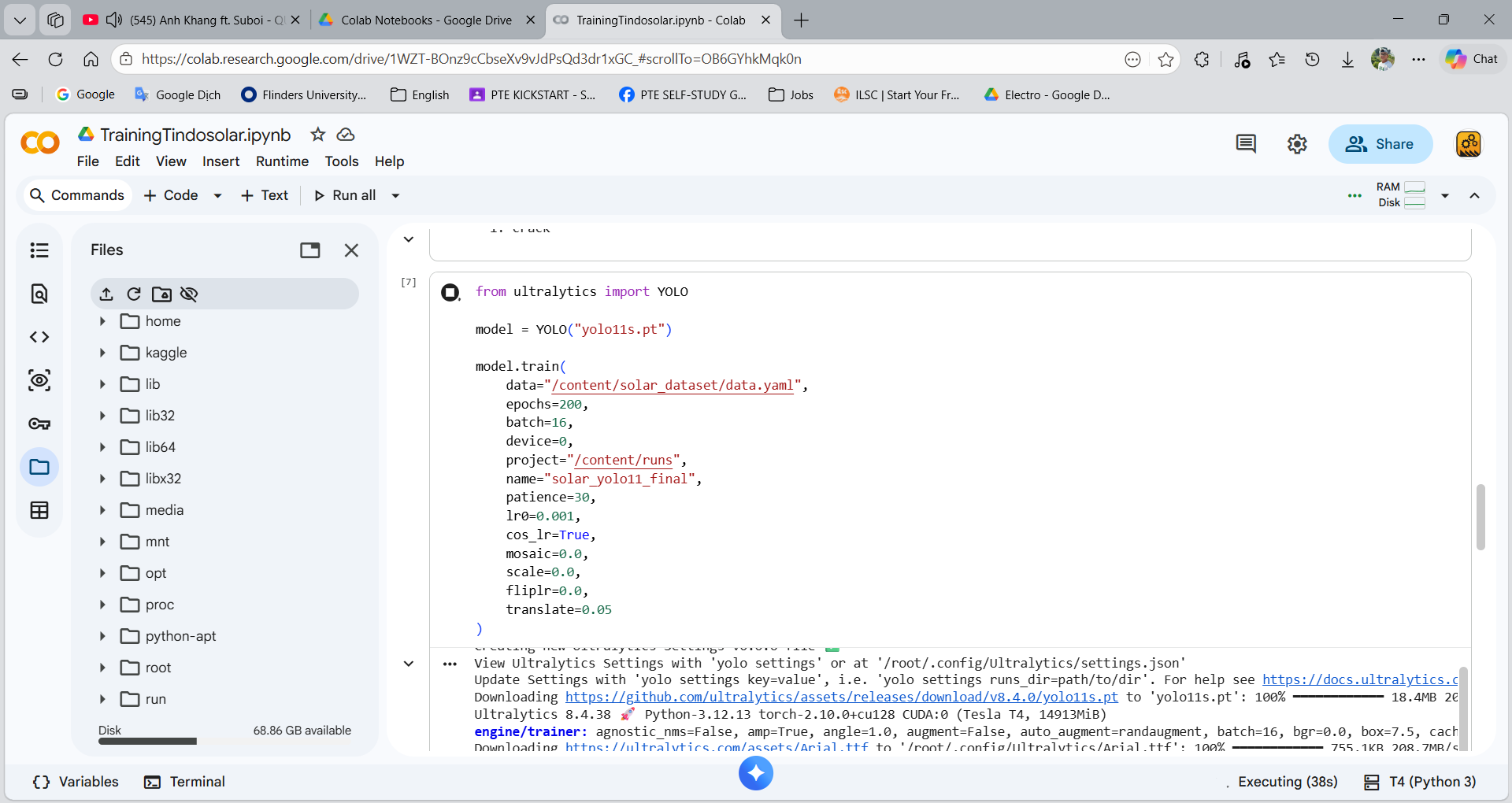

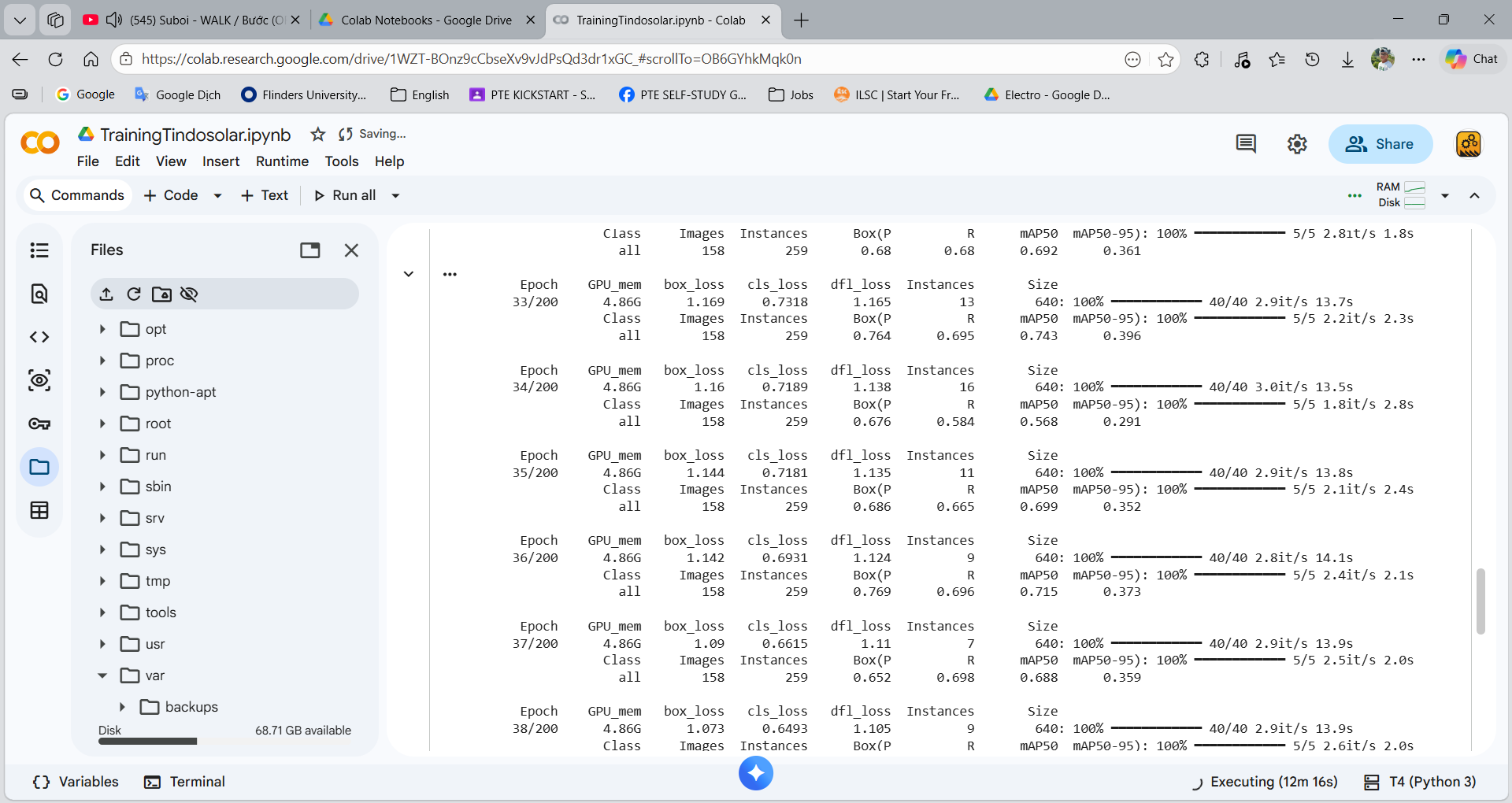
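When a labeled patch is rotated or flipped for augmentation, its bounding boxes have to be transformed in the same way. The sketch below shows one way to do that, assuming the labels are stored in the YOLO text format (class id plus normalised centre, width, and height). That format and all function names here are assumptions for illustration, not details taken from the project.

```python
from typing import Callable, Dict, List, Tuple

# One YOLO-style label: (class_id, x_center, y_center, width, height),
# with all coordinates normalised to the 0..1 range. Assumed format.
Label = Tuple[int, float, float, float, float]


def hflip(label: Label) -> Label:
    """Mirror left-right: only the x centre changes."""
    c, x, y, w, h = label
    return (c, 1.0 - x, y, w, h)


def vflip(label: Label) -> Label:
    """Mirror top-bottom: only the y centre changes."""
    c, x, y, w, h = label
    return (c, x, 1.0 - y, w, h)


def rot180(label: Label) -> Label:
    """Rotate by 180 degrees: both centre coordinates are mirrored."""
    c, x, y, w, h = label
    return (c, 1.0 - x, 1.0 - y, w, h)


def rot90_ccw(label: Label) -> Label:
    """Rotate by 90 degrees counter-clockwise: axes swap, as do width and height."""
    c, x, y, w, h = label
    return (c, y, 1.0 - x, h, w)


def unchanged(label: Label) -> Label:
    """Blurring alters pixels only, so the label stays as it is."""
    return label


AUGMENTATIONS: Dict[str, Callable[[Label], Label]] = {
    "hflip": hflip,
    "vflip": vflip,
    "rot90": rot90_ccw,
    "rot180": rot180,
    "blur": unchanged,
}


def augment_labels(labels: List[Label], name: str) -> List[Label]:
    """Apply one named augmentation to every label of a patch."""
    return [AUGMENTATIONS[name](label) for label in labels]
```

The image itself would be transformed with the matching image operation, for example Pillow's `Image.transpose` for flips and right-angle rotations, or an `ImageFilter` blur.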

- Model Training — The model was trained using YOLOv11 small version with Python, Visual Studio Code, and Google Colab.

Using Visual Studio Code for training process.

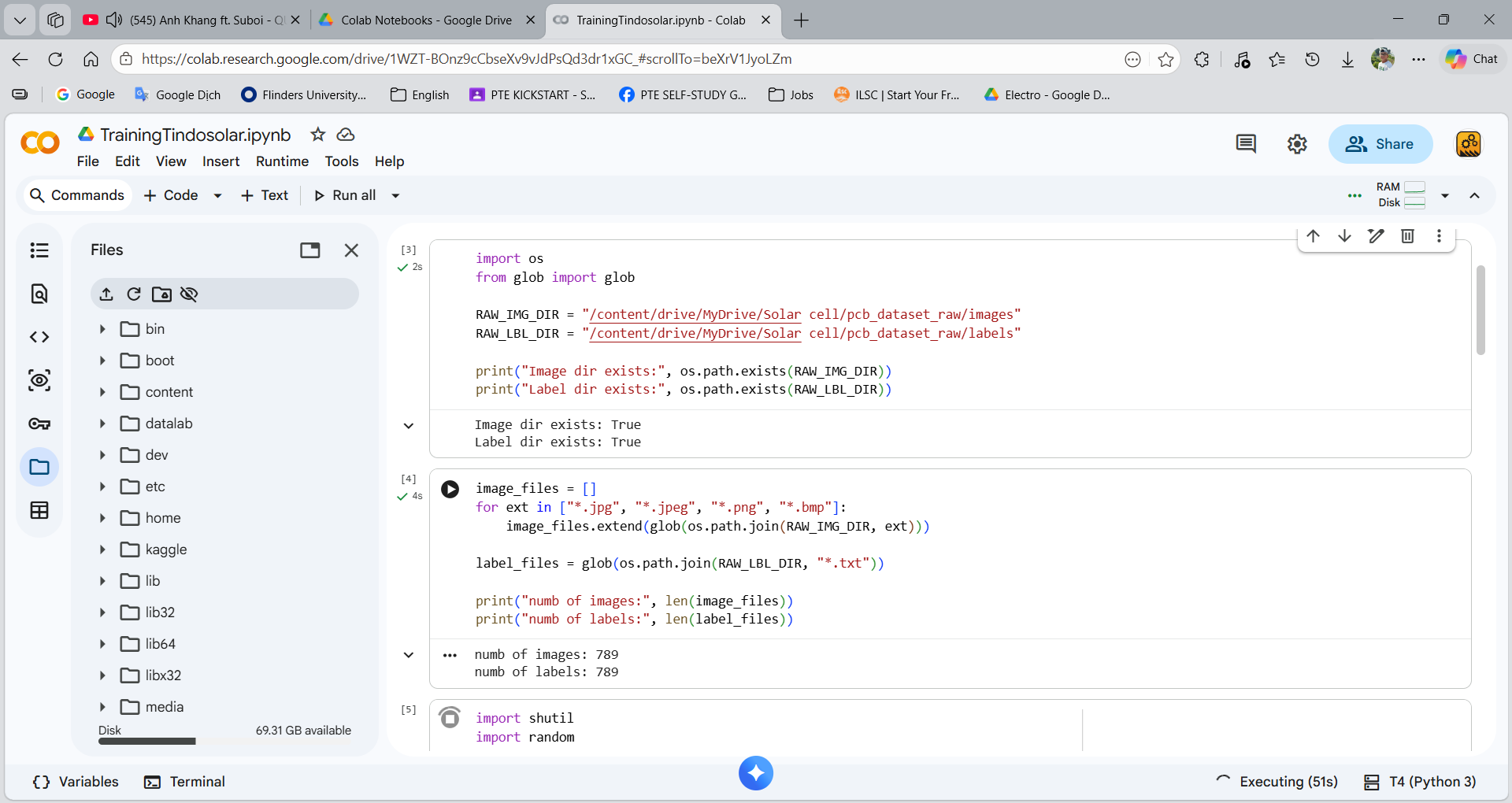

Using Google Colab for training process: storing data in Google Drive.

Using Google Colab for training process: Setup the model and configuration before training.

Using Google Colab for training process: Monitoring training process.

- Model Output — The trained model weights were saved locally for deployment.

Training Batches — Augmented image batches (Mosaic, flip, HSV) used for training.
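The split, configuration, and training steps of this phase could be sketched as follows. This is a minimal sketch, not the project's actual script: the class order, folder layout, file name `el_defects.yaml`, random seed, and epoch count are all assumptions. Only the 80/20 split, the 640-pixel input size, and the use of the small Ultralytics model come from the description above.

```python
import random
from typing import List, Sequence, Tuple

# Class order is an assumption; it must match the ids used in the label files.
CLASS_NAMES = ["crack", "soldering_issue", "scratch"]


def split_dataset(
    items: Sequence[str], train_fraction: float = 0.8, seed: int = 0
) -> Tuple[List[str], List[str]]:
    """Shuffle reproducibly and split into (training, validation) lists."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


def dataset_yaml(root: str) -> str:
    """Build the text of an Ultralytics dataset file (hypothetical folder layout)."""
    lines = [
        f"path: {root}",
        "train: images/train",
        "val: images/val",
        "names:",
    ]
    lines += [f"  {i}: {name}" for i, name in enumerate(CLASS_NAMES)]
    return "\n".join(lines) + "\n"


def train(data_yaml: str = "el_defects.yaml", epochs: int = 100):
    """Fine-tune the small pretrained model on 640x640 patches."""
    from ultralytics import YOLO  # imported lazily; requires the ultralytics package

    model = YOLO("yolo11s.pt")  # pretrained small checkpoint
    return model.train(data=data_yaml, epochs=epochs, imgsz=640)
```

With default settings, Ultralytics writes the best weights to a `weights/best.pt` file inside its run folder, which is the file that would then be copied for deployment.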

2. Implementation Phase

This phase focuses on deploying the trained model into the production environment:

API Development

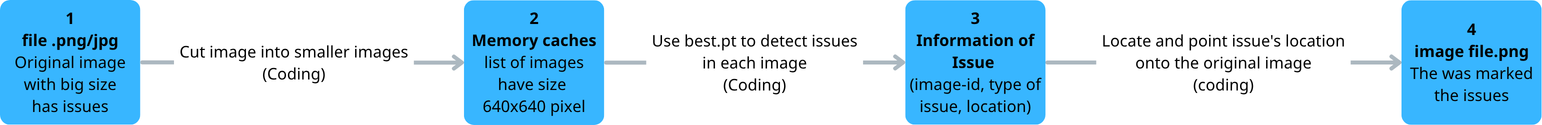

API pipeline: split images into patches, run model inference, return JSON results.

- Input images are split into 640×640 patches that are held in memory; the Pillow library handles image reading, cropping, and drawing bounding boxes

- The trained model detects defects on each patch

- Results are returned in JSON format (bounding box + class + confidence)

- Visualization — Detected defects are displayed by drawing bounding boxes and labels on the original image.

- System Integration — The API is designed to be integrated into the production line, so that each EL scan automatically triggers defect detection.

- Human-in-the-loop Verification — The AI system supports technicians, who still perform manual verification.

- Continuous Improvement — New or misclassified samples are stored for future retraining to improve the model.
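The core of the API pipeline (run the model on each patch, shift the boxes back into full-image coordinates, and return JSON) can be sketched like this. The JSON field names and the function names are assumptions made for illustration. The detector is passed in as a plain callable so the merging logic can be tried without a trained model, and `make_yolo_detector` shows how an Ultralytics model might be wrapped to fit it.

```python
import json
from typing import Any, Callable, Dict, Iterable, List, Tuple

Detection = Dict[str, Any]
# A detector receives one patch and returns detections in patch coordinates.
Detector = Callable[[Any], List[Detection]]


def detect_on_patches(
    patches: Iterable[Tuple[Tuple[int, int], Any]],
    detector: Detector,
    min_conf: float = 0.5,
) -> List[Detection]:
    """Run the detector per patch and map boxes to full-image coordinates."""
    results: List[Detection] = []
    for (left, top), patch in patches:
        for det in detector(patch):
            if det["confidence"] < min_conf:
                continue  # drop detections below the confidence threshold
            x1, y1, x2, y2 = det["box"]
            results.append({
                "class": det["class"],
                "confidence": round(det["confidence"], 3),
                # add the patch offset to recover original-image coordinates
                "box": [x1 + left, y1 + top, x2 + left, y2 + top],
            })
    return results


def to_json(detections: List[Detection]) -> str:
    """Serialise the merged detections (hypothetical response shape)."""
    return json.dumps({"detections": detections})


def make_yolo_detector(weights_path: str) -> Detector:
    """Wrap a trained Ultralytics model as a Detector. Requires ultralytics."""
    from ultralytics import YOLO

    model = YOLO(weights_path)

    def detect(patch: Any) -> List[Detection]:
        result = model.predict(patch, verbose=False)[0]
        boxes = result.boxes
        return [
            {"class": result.names[int(c)], "confidence": float(p), "box": xyxy}
            for xyxy, c, p in zip(
                boxes.xyxy.tolist(), boxes.cls.tolist(), boxes.conf.tolist()
            )
        ]

    return detect
```

Because the last row and column of patches may overlap their neighbours, a defect on a patch border can be reported twice; a real service would probably need a de-duplication step, which is left out here.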

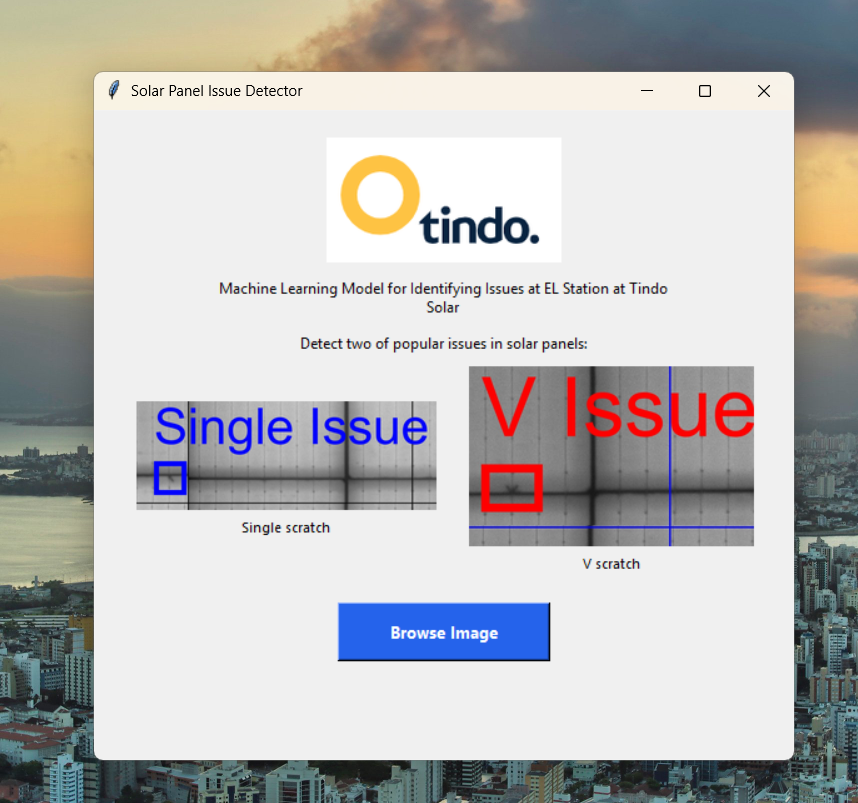

Prototype — Video Demos

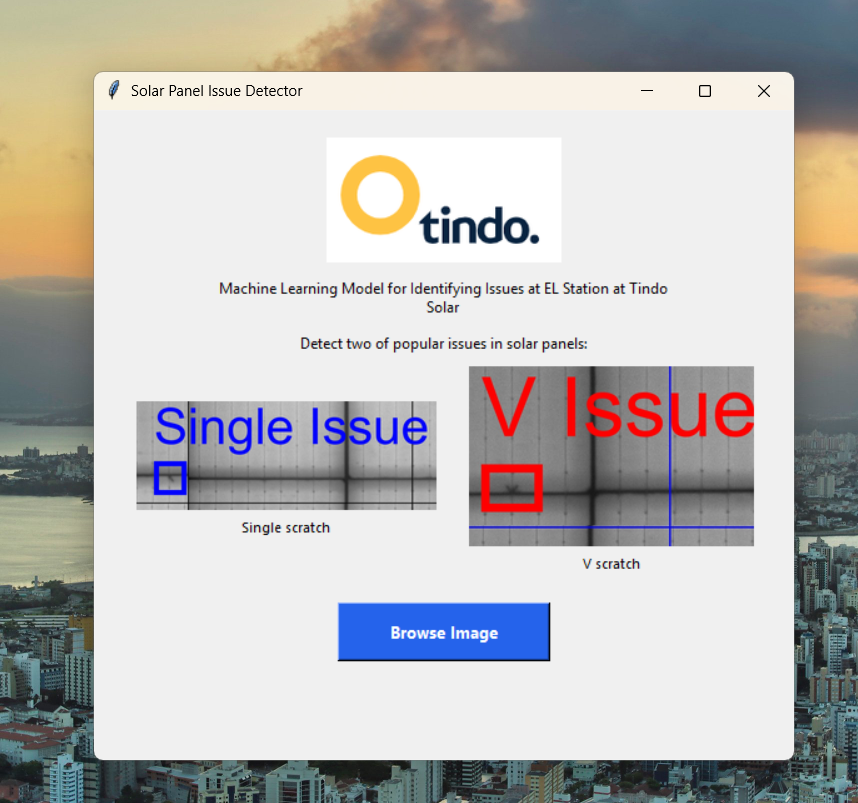

Application Interface

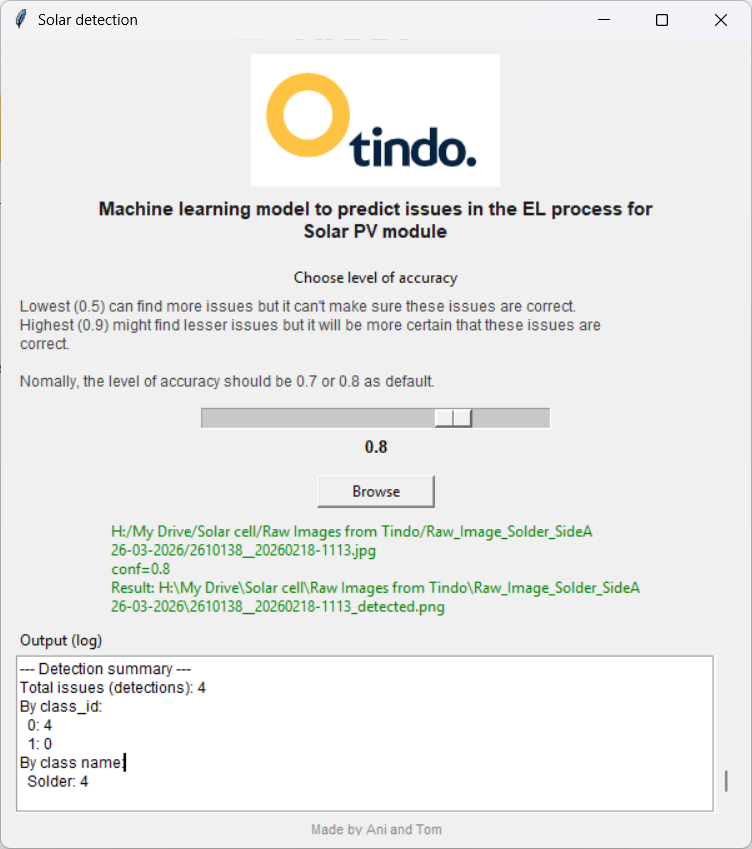

Graphical user interface for defect detection.

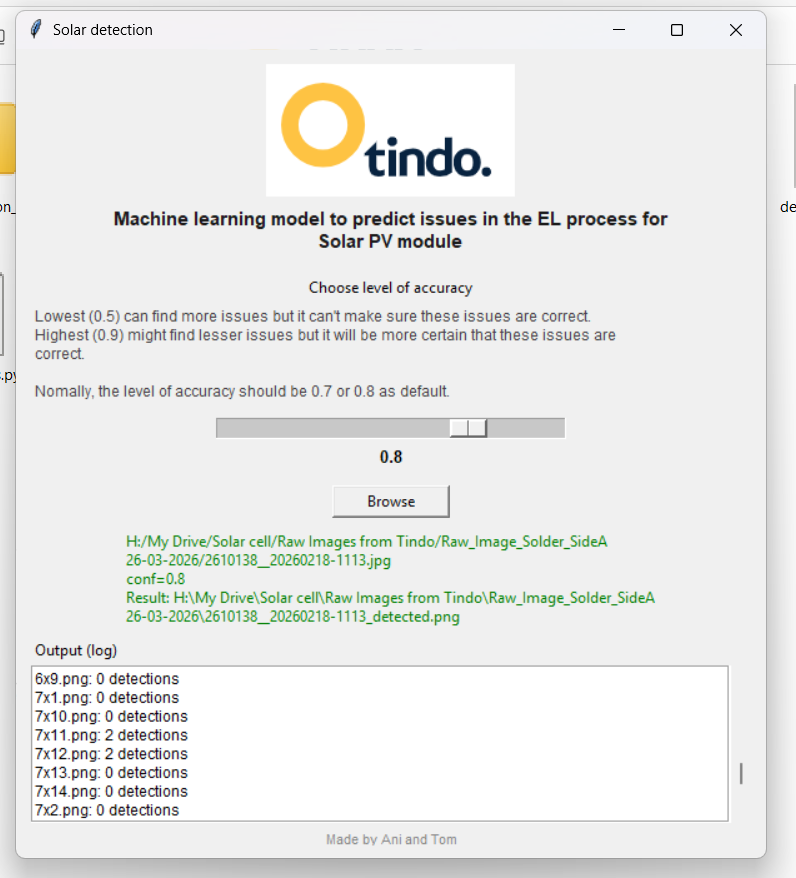

Graphical user interface: adjusting the confidence value and viewing the results.

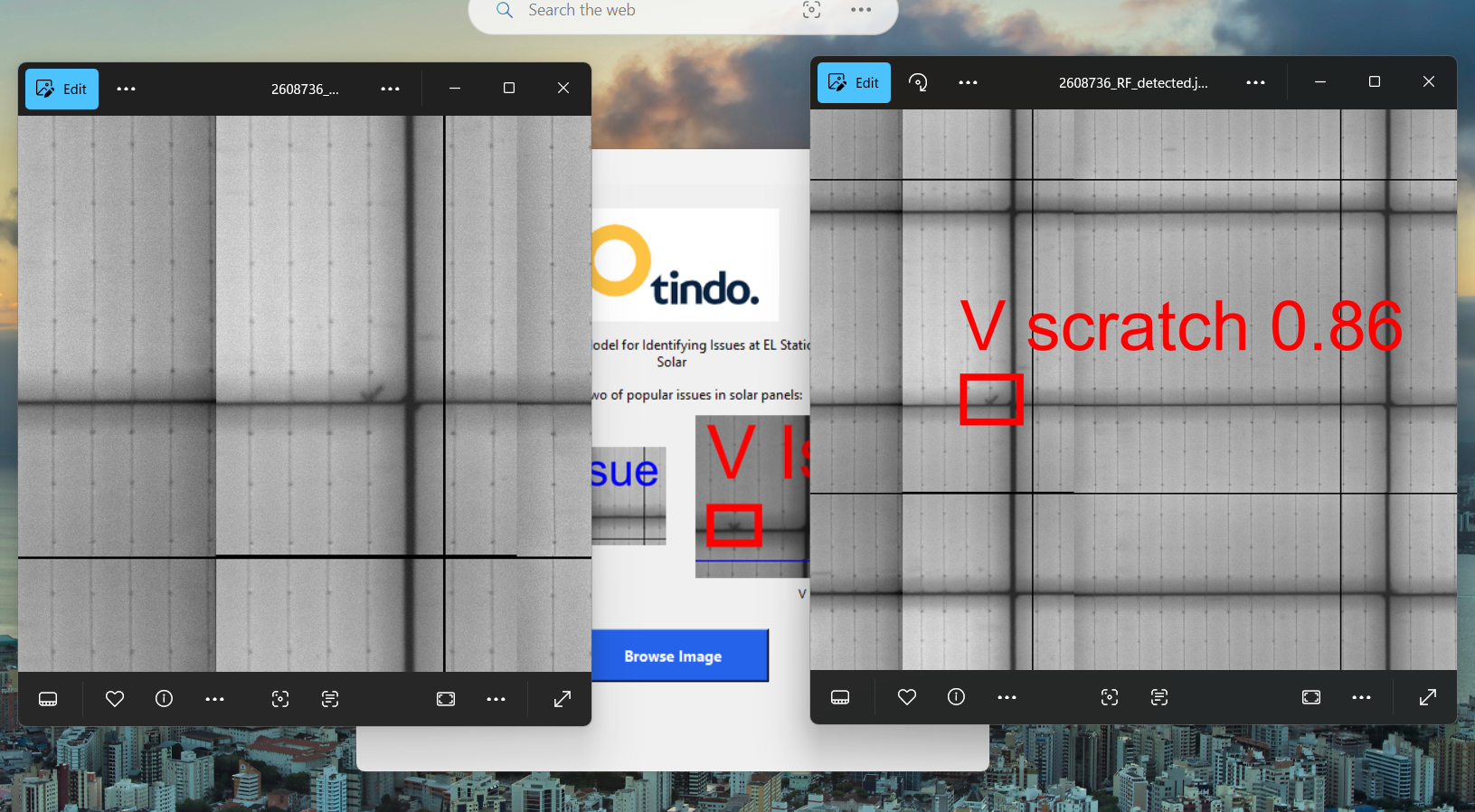

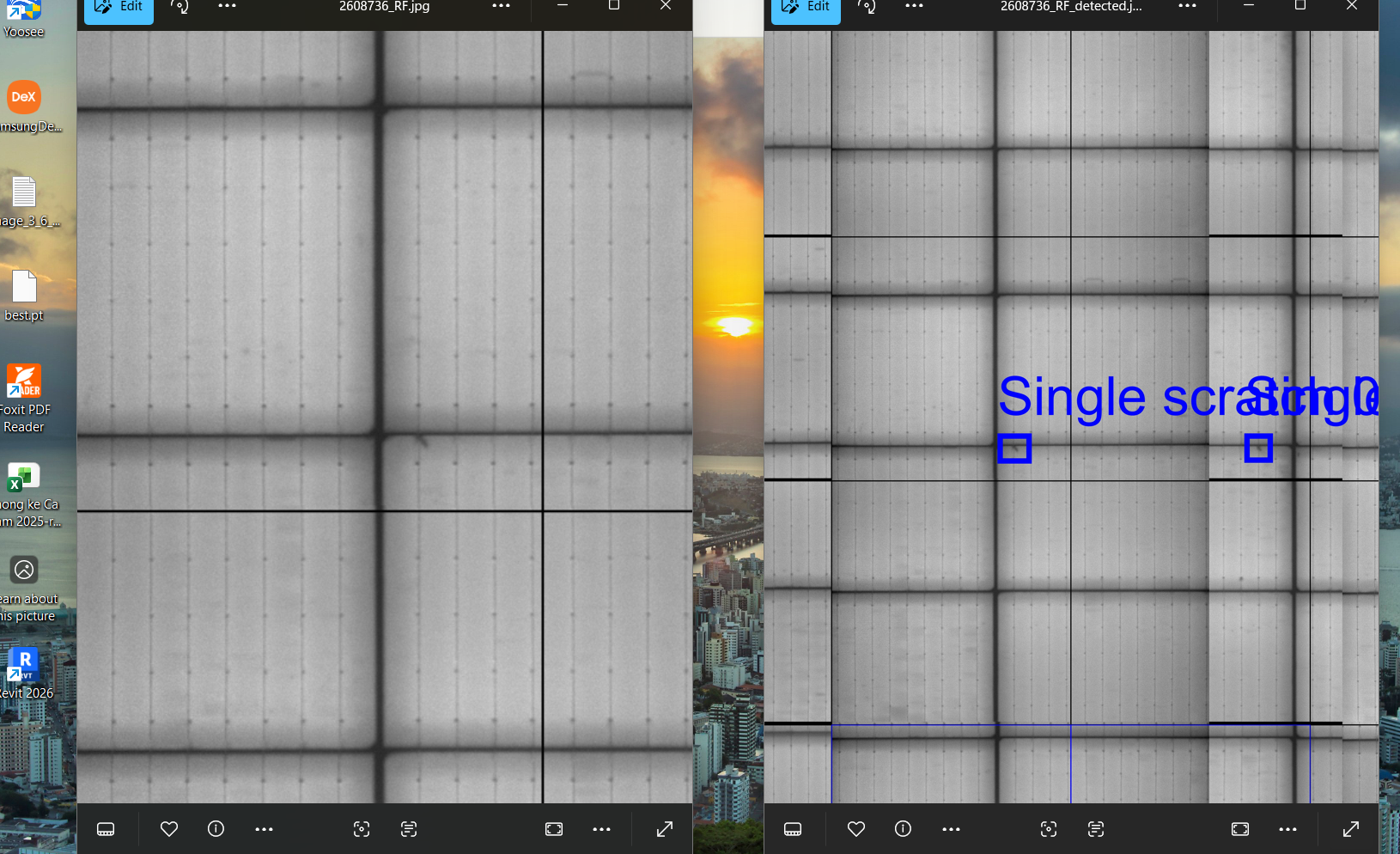

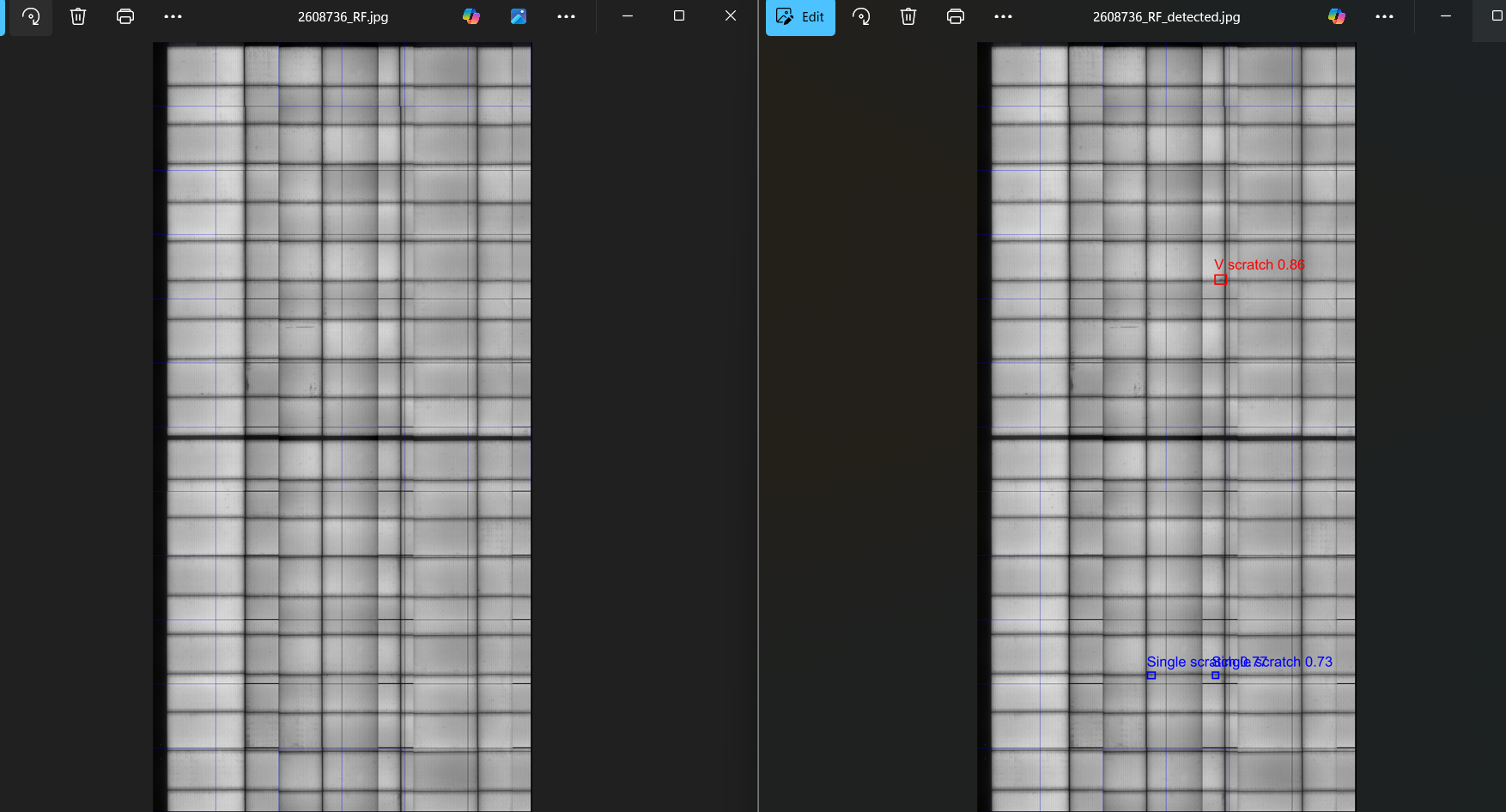

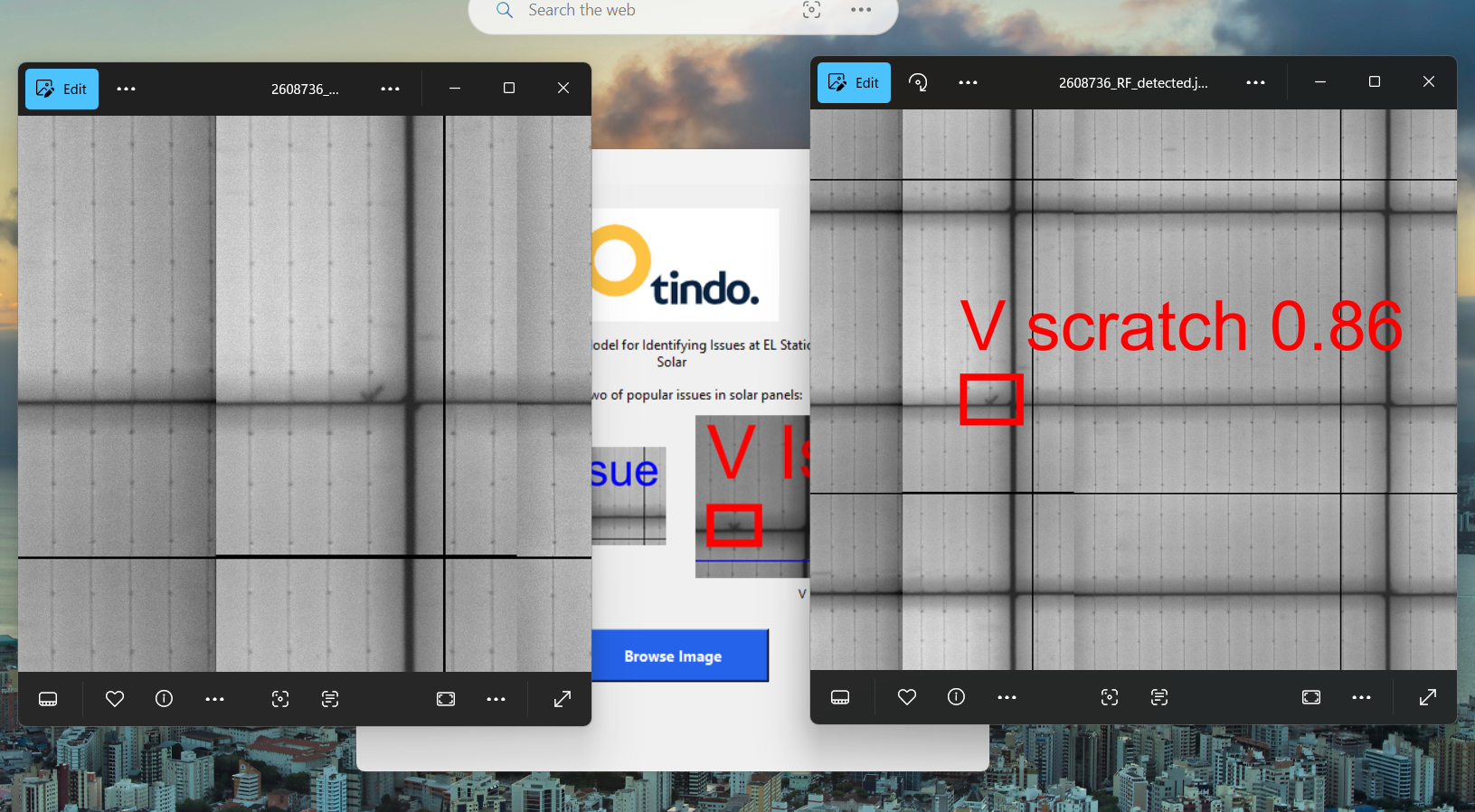

A V-shaped scratch was detected

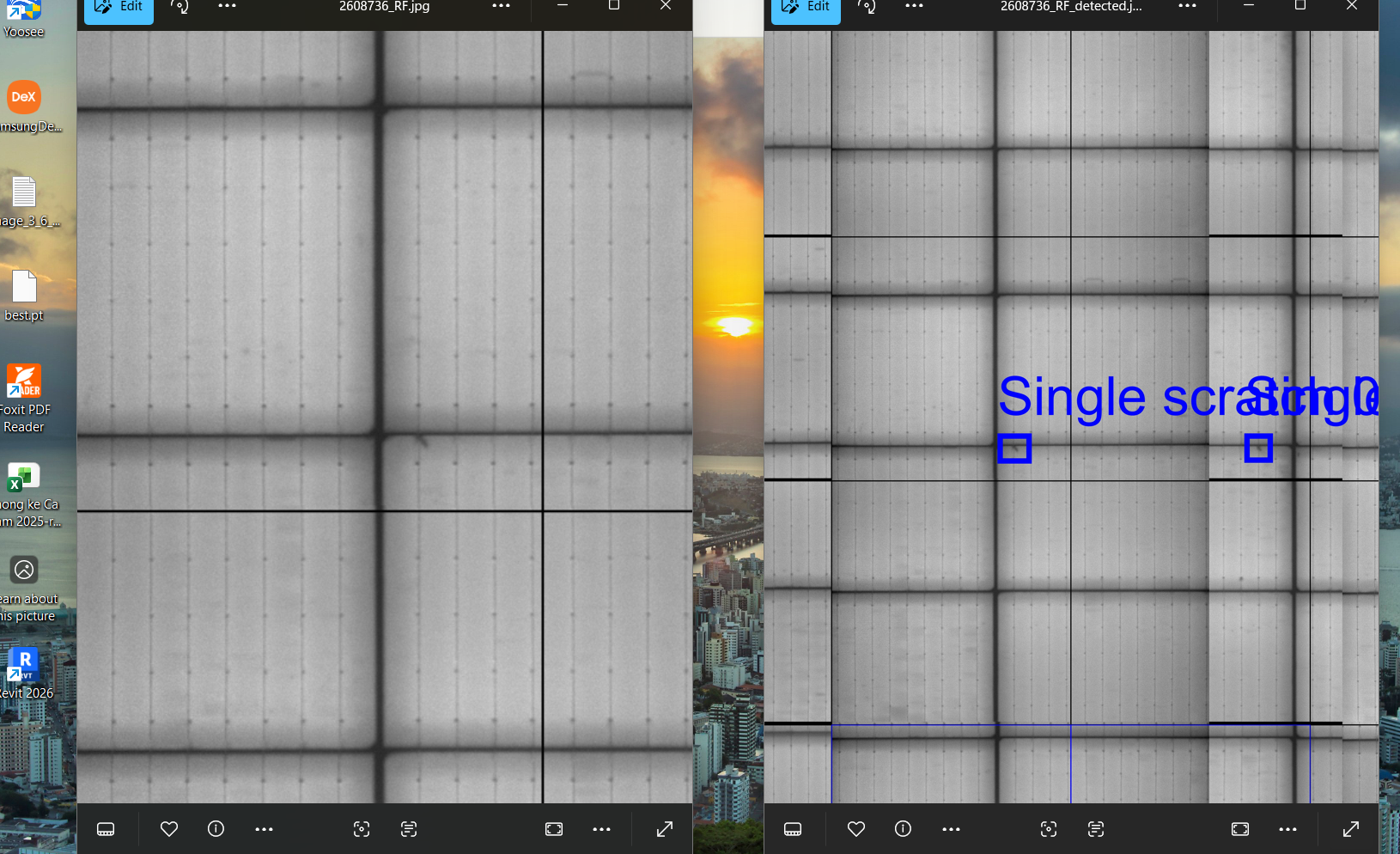

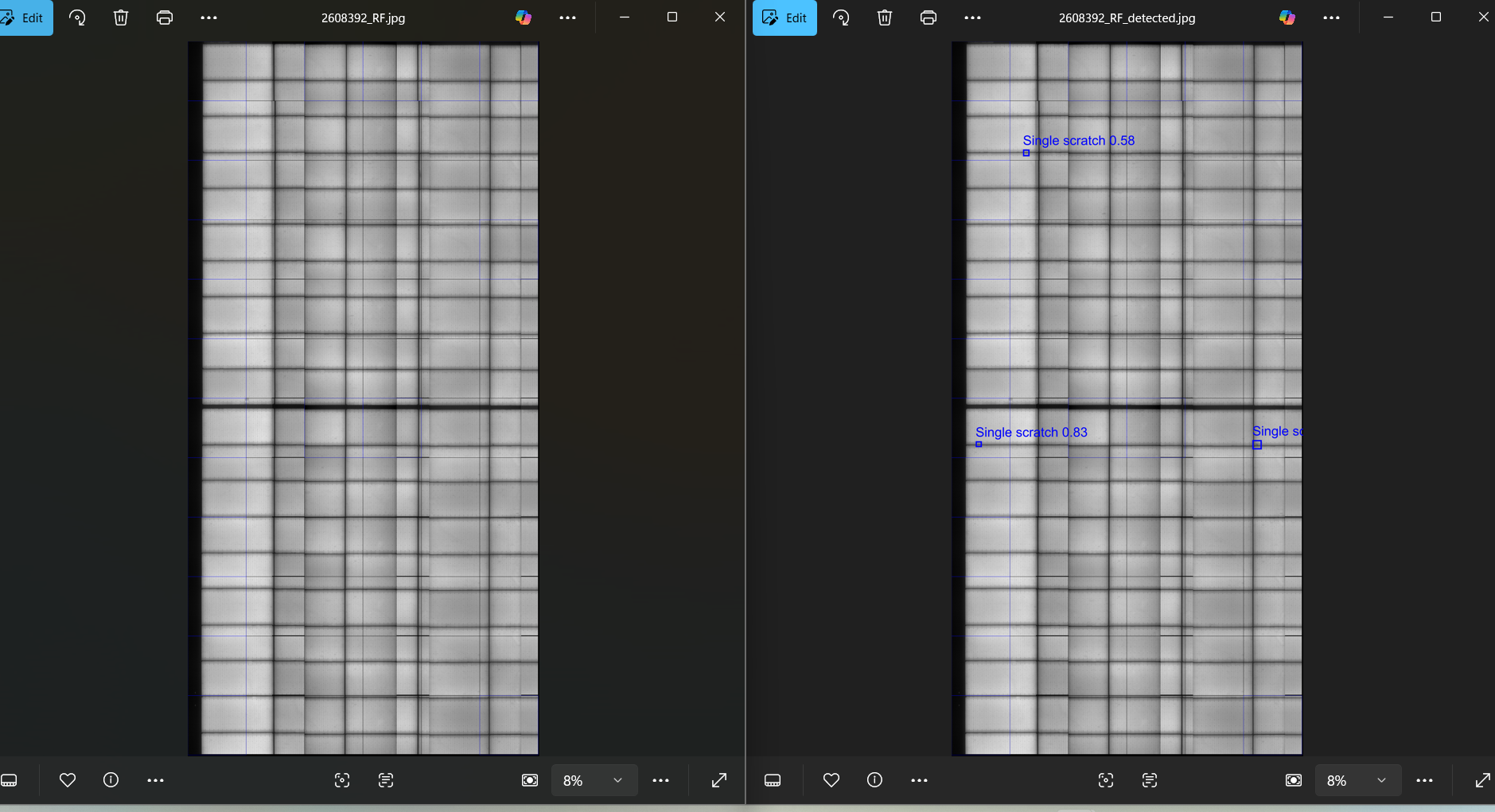

A single scratch was detected

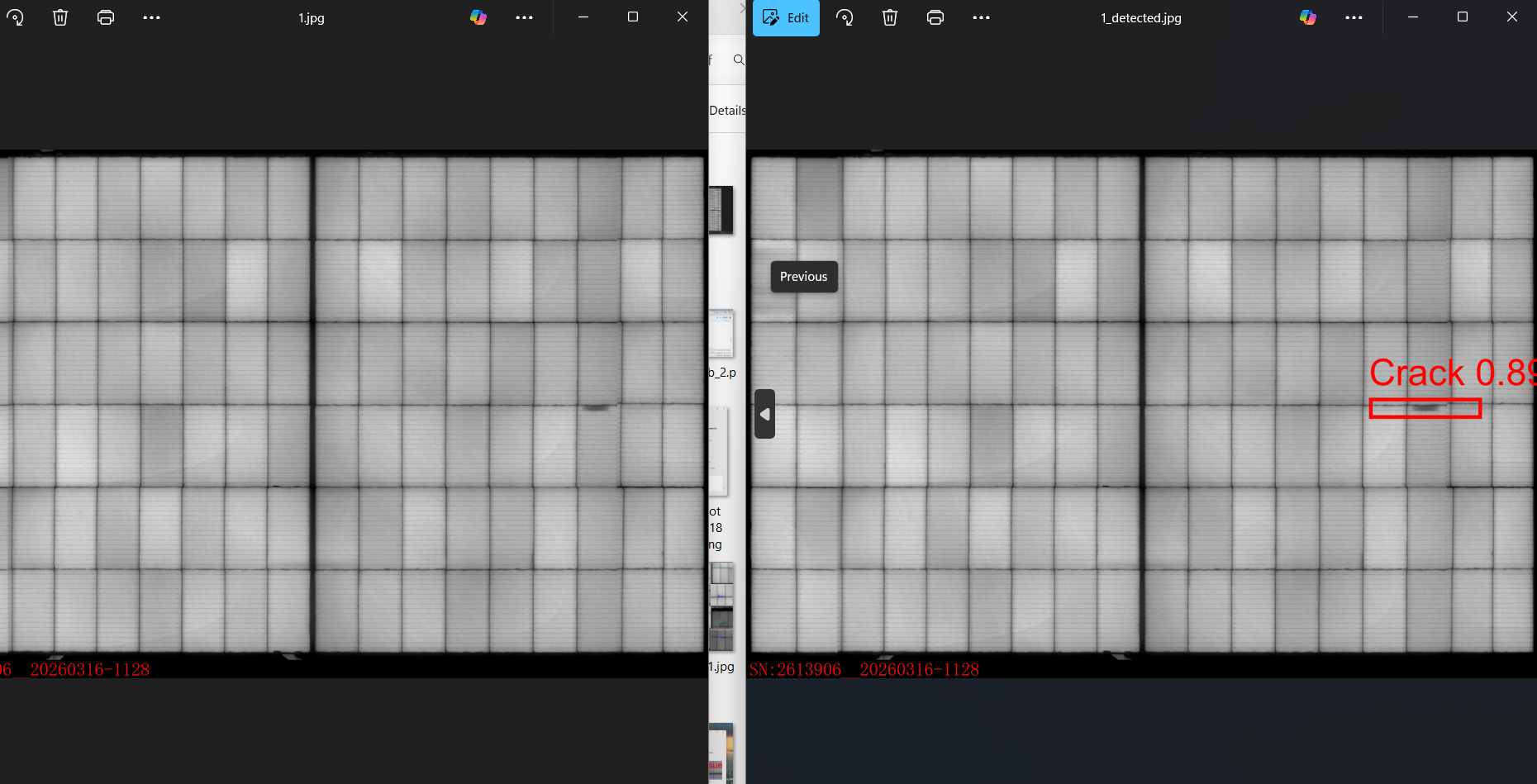

Soldering issues were detected and marked with red rectangles

Scratch issues were detected and marked with blue rectangles

Issues were detected and marked by the trained model

Different issue types are marked in red and blue

With the confidence threshold set to 0.85, only higher-confidence detections are shown
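The effect of the confidence setting in the interface can be illustrated with a small sketch: raising the threshold keeps fewer, more certain detections. The function name and the threshold steps are assumptions, and the scores in the usage note are made-up values, not results from the project.

```python
from typing import Dict, List, Sequence


def counts_by_threshold(
    confidences: List[float],
    thresholds: Sequence[float] = (0.5, 0.6, 0.7, 0.8, 0.9),
) -> Dict[float, int]:
    """Count how many detections survive at each confidence threshold."""
    return {t: sum(1 for c in confidences if c >= t) for t in thresholds}
```

For example, with illustrative scores `[0.95, 0.86, 0.72, 0.55, 0.41]`, four detections remain at a threshold of 0.5 but only one at 0.9.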

Result & Conclusion

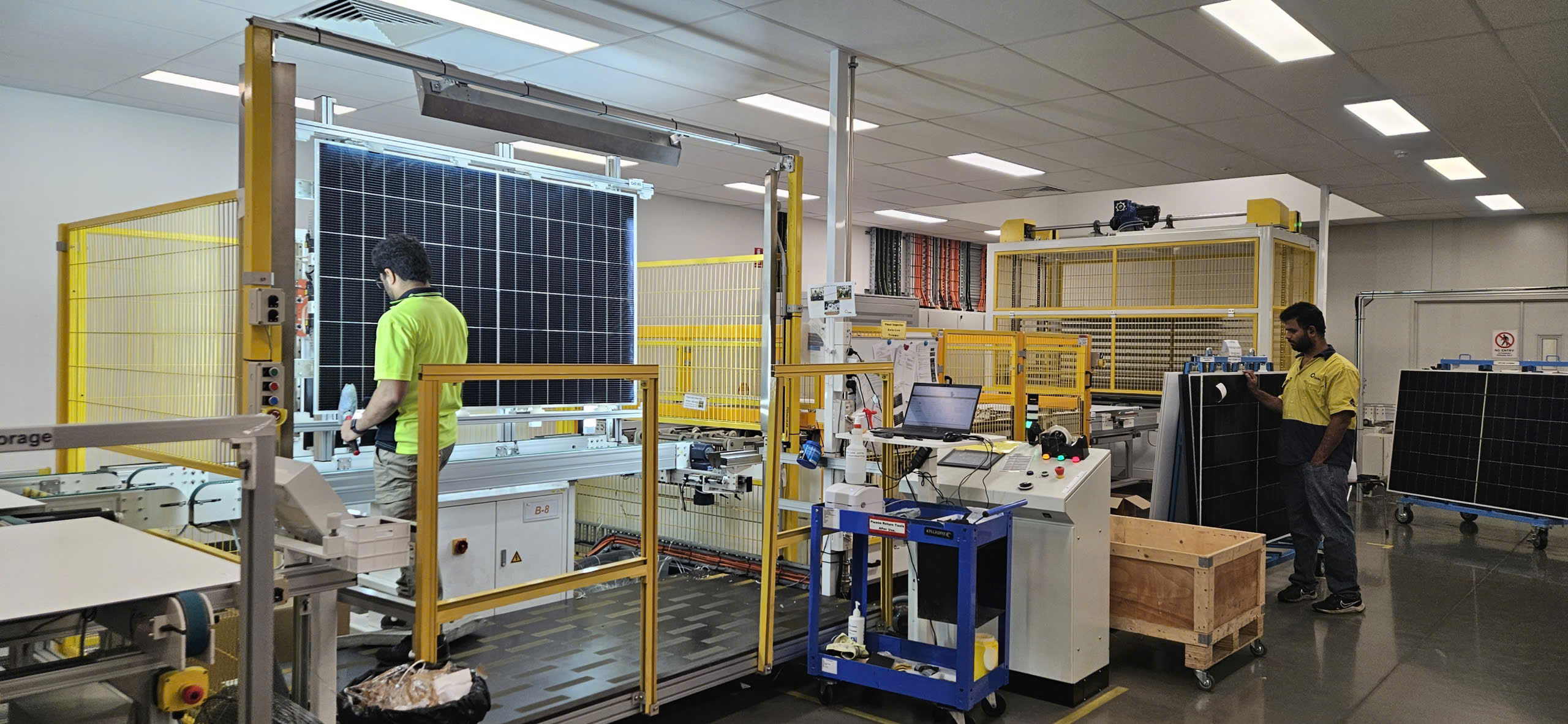

My colleagues and I at the factory (for illustration only; not related to the project).

At the current stage, the model has been built and a prototype UI has been developed for testing and evaluation. The trained model can detect common defects in solar panels from EL images, and the prototype interface lets the user adjust the confidence threshold (from 0.5 to 0.9) to analyse detection performance. These initial results demonstrate the feasibility of applying machine learning to support defect inspection in production.

The project continues to progress, with further improvements expected over time. Future work will focus on enhancing the model, expanding the dataset, and completing the full implementation pipeline to achieve better performance and reliability.